Training assessments are the key to understanding how well your training is working. Whether you work in healthcare, public health, schools, businesses, or other organizations, they’re the best way to evaluate learner progress. They can help you and your facilitators identify areas where people need extra support in their training and where your efforts are succeeding.

Collecting feedback is one of the best ways to improve your program, whether it comes from an assessment in a training program or a survey aimed at your community members as part of user research.

When you ask your learners to complete a survey or assessment, you hear opinions, perspectives, and judgments about your practices and programs directly from the people who use them.

You should have clear objectives for what you want to learn from participants before deciding what types of questions to use. Once you have defined those goals, you can begin to choose the types of questions that will yield appropriate data.

This article will introduce you to the most common types of questions used in assessments and surveys and help you decide which will get you the feedback you want. We’ve included examples and suggestions to help you create your own.

Understanding the Types of Assessment

There are two main types of training assessments: formative and summative.

Formative assessments help educators understand what’s going well and what needs improvement.

Using formative assessments lets you give feedback right away during training so learners can keep learning. These assessments bring out strengths, weaknesses, and areas for improvement.

Examples are asking someone to summarize what they just learned in a couple of sentences or asking a quick knowledge-check question.

On the flip side, summative assessments provide data that can be used to make decisions about future instruction. These assessments are usually used as a benchmark at the end of a course.

Examples are what you probably think about when you think of post-assessments or final projects.

Types of Questions Used in Training Assessments

At the top level, questions fall into two types:

- Close-ended questions that produce quantitative data. These can be answered with a “yes” or “no.” They can also have limited answers, as in a multiple-choice quiz.

- Open-ended questions that produce qualitative data. These allow someone to give freeform responses, usually in a sentence, paragraph, or longer.

Open-ended questions are helpful when you don’t want to influence the kind of response you’re looking to collect. You might use these when doing research into a community’s needs or asking for suggestions.

When testing learners in assessments, prioritize close-ended survey questions. They produce the most manageable results. Later in this article, you will also learn where open-ended questions fit in your survey design.

Close-ended Survey Questions

Close-ended survey questions provide a fixed set of options. Data from close-ended questions is quantitative and can be calculated into figures like percentages, statistics, or scores.
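To show how close-ended answers turn into figures, here is a minimal Python sketch that converts the answers to one question into percentages. The function name `answer_percentages` and the sample responses are made up for illustration and are not taken from any real survey.

```python
from collections import Counter


def answer_percentages(responses):
    """Return each answer option's share of all responses, as a percentage."""
    total = len(responses)
    if total == 0:
        return {}
    counts = Counter(responses)
    return {option: round(100 * count / total, 1) for option, count in counts.items()}


# Hypothetical answers to a single multiple-choice question.
responses = ["A", "B", "A", "C", "A", "B", "A", "A"]
percentages = answer_percentages(responses)
print(percentages)
```

If you use a survey tool, it will likely report these figures for you. The point of the sketch is only that close-ended data can be counted directly, with no interpretation step.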

In training assessments, common close-ended questions include:

- multiple-choice questions

- rating scale questions

- Likert scale questions

- semantic differential questions

- ranking questions

- dichotomous questions

Read on for more information about each type of question and examples.

Multiple-choice Questions

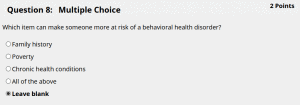

Multiple-choice questions offer a fixed pool of answers to choose from. They produce clean data for you to analyze and are among the easiest questions for participants to complete. Here’s an example from a behavioral health course:

This type of question requires students to think critically and analyze the material. It also gives instructors the chance to see where students need more practice.

When creating multiple-choice questions, it is important to create a direct and simple question with a comprehensive set of answers. Do your best to create answers that cover all options but do not overlap with one another.

One way to avoid biased results and limitations in your answer set is to give the respondent an “Other” option. Although adding an option for “Other” may not allow the data to be as neat, participants can offer a perspective you have not considered.

Another consideration when developing multiple-choice questions is deciding whether you want a single answer or allow multiple answers.

Multiple choice = only one correct option

Multiple answer = more than one correct option

Use single-answer questions when you want the respondent to make one choice. You may want a single answer because you want the participant to make a decision or because only one answer can apply, as with age. In general, multiple-choice questions are effective for collecting demographic information.

Use multiple-answer questions when you want participants to select all answers that apply. For instance, participants can select more than one service or product they use.

The example above could be rephrased as a multiple-answer question this way:

Choose all of the examples of items that can make someone more at risk of a behavioral health disorder.
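If you score these questions yourself, the single-answer and multiple-answer formats need slightly different logic. The Python sketch below is one possible approach and assumes all-or-nothing scoring for multiple-answer questions. The function names and option labels ("a", "b", "c") are placeholders, and your own assessment tool may award partial credit instead.

```python
def score_single_answer(selected, correct):
    """Return 1 if the one selected option is the correct one, otherwise 0."""
    return 1 if selected == correct else 0


def score_multiple_answer(selected, correct):
    """All-or-nothing: return 1 only if the selected options match the key exactly."""
    return 1 if set(selected) == set(correct) else 0


# Placeholder option labels; the order of selection does not matter.
single_score = score_single_answer("b", "b")
exact_score = score_multiple_answer(["a", "c"], ["c", "a"])
partial_score = score_multiple_answer(["a"], ["a", "c"])
print(single_score, exact_score, partial_score)
```

All-or-nothing scoring is strict. If you would rather reward learners who pick some of the correct options, decide on a partial-credit rule before the assessment goes out.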

Rating Scales

Rating scale questions allow participants to rate or assign weight to an answer choice. Use rating scale questions when you want to learn what a respondent thinks or feels across a scale.

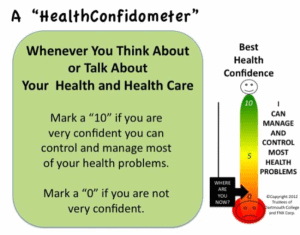

An example of this is a confidence scale about health self-management:

Source: Howsyourhealth.org

When using a rating scale question, you are asking the respondent to indicate where their response lies on a scale like 0 to 10, 1 to 5, or 0 to 100. You might ask the participant to rate their happiness, satisfaction, likelihood of doing something, or experience with your organization.

Rating scale questions can be an effective tool to evaluate change, growth, or progress over time.

For example, if you use them as an assessment tool at the beginning of a new program, you can ask the same question later or at the final stage of the program to measure changes in sentiment among participants.
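As a rough illustration of measuring change over time, the Python sketch below compares ratings from the same participants at the start and end of a program. It assumes both lists are in the same participant order. The scores are invented and `average_change` is a hypothetical helper name.

```python
from statistics import mean


def average_change(pre_scores, post_scores):
    """Return the mean per-participant change between two rounds of ratings."""
    if len(pre_scores) != len(post_scores):
        raise ValueError("Each participant needs both a pre and a post score.")
    return mean(post - pre for pre, post in zip(pre_scores, post_scores))


# Invented 0-to-10 confidence ratings from four participants.
pre = [4, 5, 3, 6]
post = [7, 6, 5, 8]
change = average_change(pre, post)
print(change)
```

A positive result means ratings rose on average. It does not tell you why they rose, so pair it with what you know about the program.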

When designing a rating scale question, clearly label what the numbers mean and how they relate to one another. If you choose a rating scale between 0 and 10, what will 0 represent and what will 10 represent?

Likert Scales

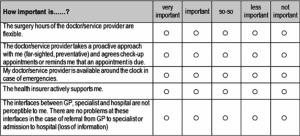

A Likert scale measures a participant’s agreement with a statement. Likert scales are useful for measuring attitudes and behaviors because they ask the respondent to select how much they agree or disagree.

Here’s an example from a quantitative patient survey:

A Likert scale question is a type of rating scale question, but it specifically labels each answer with a level of agreement or likelihood from “strongly disagree” to “strongly agree” or “not at all likely” to “highly likely.”

Use Likert scale questions to measure participant attitudes, opinions, and beliefs, and use a 5- or 7-point scale with clear labels.
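One common way to summarize Likert responses is to code each label as a number and report the median along with the share of respondents who agree. The Python sketch below assumes a 5-point scale. The middle labels, the sample responses, and the name `summarize_likert` are illustrative assumptions.

```python
from statistics import median

# Assumed 5-point agreement scale; adjust the labels to match your own survey.
LIKERT_5 = {
    "Strongly disagree": 1,
    "Disagree": 2,
    "Neutral": 3,
    "Agree": 4,
    "Strongly agree": 5,
}


def summarize_likert(responses):
    """Return the median code and the percentage who chose Agree or Strongly agree."""
    codes = [LIKERT_5[label] for label in responses]
    agree_count = sum(1 for code in codes if code >= 4)
    return {
        "median": median(codes),
        "percent_agree": round(100 * agree_count / len(codes), 1),
    }


# Invented responses to one statement.
responses = ["Agree", "Strongly agree", "Neutral", "Agree", "Disagree"]
summary = summarize_likert(responses)
print(summary)
```

The sketch reports a median and a percentage because the gaps between labels are not necessarily equal, which makes an average harder to interpret.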

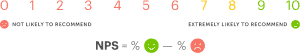

Semantic Differential Questions

A semantic differential question is another tool for rating and understanding opinions and attitudes. It asks participants to rate something on a scale between two opposing statements, with an opposite adjective at each end.

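You see questions in this format in customer satisfaction surveys. The Net Promoter Score question, for example, uses a scale anchored by two opposing endpoints, “not at all likely” and “extremely likely”: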

Semantic differential questions help you gather multiple impressions about one subject area.

For instance, you would define polar opposite statements like strong-weak, love-hate, exceptional-terrible, expensive-cheap, likely to return-unlikely to return, or satisfied-unsatisfied and include multi-point options in between.

You can capture multiple attitudes about a service with one question, like “What is your impression of Service A?” Respondents then rate it on scales from “Hard to Use” to “Easy to Use,” “Weak” to “Strong,” and “Cheap” to “High Quality,” all to learn more about Service A.

Use semantic differential questions when you want to collect many opinions in one question.

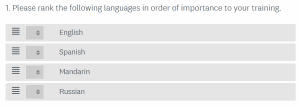

Ranking Questions

This type of question lets participants compare list items with one another and assign an order of preference to them.

Ranking question data will show the level of priority or importance multiple items have to the participant, but it will not offer insight into “why.”

If you want to look closely at individual respondent preferences, a ranking question is appropriate because it shows the order in which each person prefers the options. Large data sets are harder to interpret this way: instead of learning how much more one item is preferred to another, you learn only which items are generally preferred.

Use ranking questions to learn what your participants value and prioritize most.
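When you combine rankings from many respondents, one simple option is to average the position each item received. The Python sketch below assumes every respondent ranked every item, with position 1 as the most preferred. The item names and `average_ranks` are hypothetical.

```python
from collections import defaultdict


def average_ranks(rankings):
    """Return (item, average position) pairs, most preferred first."""
    totals = defaultdict(int)
    for ranking in rankings:
        # Position 1 is the respondent's top choice.
        for position, item in enumerate(ranking, start=1):
            totals[item] += position
    respondents = len(rankings)
    averages = {item: total / respondents for item, total in totals.items()}
    return sorted(averages.items(), key=lambda pair: pair[1])


# Three invented respondents ranking the same three topics.
rankings = [
    ["Topic A", "Topic B", "Topic C"],
    ["Topic B", "Topic A", "Topic C"],
    ["Topic A", "Topic C", "Topic B"],
]
overall = average_ranks(rankings)
print([item for item, _ in overall])
```

The averaged result shows which items are generally preferred. It hides how strongly any one person felt, which is the trade-off of analyzing rankings in bulk.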

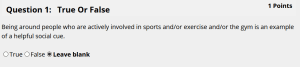

Dichotomous Questions

Dichotomous survey questions give respondents two answer options, for example Yes/No or True/False.

These questions are simple and quick for respondents to answer, and they offer you clean data to analyze. The weakness of dichotomous questions is that they leave no room for degrees of preference. This gives you little to work with when you interpret your training assessment results.

Use a dichotomous question if you want a participant to make a strict decision.

Open-ended Questions

Instead of giving participants a set of answers to choose from, open-ended questions allow participants to respond in their own words in a text box. We use these in evaluation surveys often.

The data from open-ended questions is qualitative and less focused on measurement.

Open-ended question responses offer insight into learner impressions, opinions, motivations, challenges, and attitudes. They do take a level of interpretation on your end, and it may help to put each answer in context with any information respondents provided about themselves and their other survey selections.

Open-ended questions and qualitative data require more attention in training assessments, but they can fill gaps in what your survey is trying to learn. You can pair an open-ended question with a close-ended question to understand more about a participant’s decision: “What is the reason for your selection/score/ranking?” You may also include them independently: “How do you think you can improve as a business?”

The general advice is to use open-ended questions sparingly and strategically.

As with creating any kind of educational or survey content, coming up with effective and clear assessments takes practice. Great training content is always a work in progress.